MiniMax M2 DWQ


How to use catalystsec/MiniMax-M2-4bit-DWQ with MLX:

# Make sure mlx-lm is installed
# pip install --upgrade mlx-lm

# Generate text with mlx-lm
from mlx_lm import load, generate

model, tokenizer = load("catalystsec/MiniMax-M2-4bit-DWQ")

prompt = "Write a story about Einstein"
messages = [{"role": "user", "content": prompt}]
prompt = tokenizer.apply_chat_template(
    messages, add_generation_prompt=True
)

text = generate(model, tokenizer, prompt=prompt, verbose=True)

How to use catalystsec/MiniMax-M2-4bit-DWQ with Pi:

# Install MLX LM:
uv tool install mlx-lm

# Start a local OpenAI-compatible server:
mlx_lm.server --model "catalystsec/MiniMax-M2-4bit-DWQ"

# Install Pi:

npm install -g @mariozechner/pi-coding-agent

# Add to ~/.pi/agent/models.json:

{
  "providers": {
    "mlx-lm": {
      "baseUrl": "http://localhost:8080/v1",
      "api": "openai-completions",
      "apiKey": "none",
      "models": [
        {
          "id": "catalystsec/MiniMax-M2-4bit-DWQ"
        }
      ]
    }
  }
}

# Start Pi in your project directory:
pi
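If `~/.pi/agent/models.json` already lists other providers, hand-editing it risks overwriting them. The following is a minimal sketch of merging the `mlx-lm` entry shown above into an existing file. The helper name `add_mlx_provider` is hypothetical and not part of Pi, and the sketch assumes the file has exactly the layout shown above.

```python
import json
from pathlib import Path


def add_mlx_provider(config_path, model_id, base_url="http://localhost:8080/v1"):
    """Merge an mlx-lm provider entry into a Pi models.json file.

    Hypothetical helper: assumes the file layout shown in the model card
    and leaves any other providers in the file untouched.
    """
    config_path = Path(config_path)
    config = {}
    if config_path.exists():
        config = json.loads(config_path.read_text())

    providers = config.setdefault("providers", {})
    provider = providers.setdefault("mlx-lm", {})
    provider.update(
        {"baseUrl": base_url, "api": "openai-completions", "apiKey": "none"}
    )

    # Register the model only once, even if the helper runs repeatedly.
    models = provider.setdefault("models", [])
    if not any(entry.get("id") == model_id for entry in models):
        models.append({"id": model_id})

    config_path.parent.mkdir(parents=True, exist_ok=True)
    config_path.write_text(json.dumps(config, indent=2) + "\n")
    return config


# Example call (writes to the path named in the instructions above):
# add_mlx_provider(Path.home() / ".pi" / "agent" / "models.json",
#                  "catalystsec/MiniMax-M2-4bit-DWQ")
```

The example call is left commented out so that running the snippet does not modify a real configuration file.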

How to use catalystsec/MiniMax-M2-4bit-DWQ with Hermes Agent:

# Install MLX LM:
uv tool install mlx-lm

# Start a local OpenAI-compatible server:
mlx_lm.server --model "catalystsec/MiniMax-M2-4bit-DWQ"

# Install Hermes:
curl -fsSL https://hermes-agent.nousresearch.com/install.sh | bash
hermes setup

# Point Hermes at the local server:
hermes config set model.provider custom
hermes config set model.base_url http://127.0.0.1:8080/v1
hermes config set model.default catalystsec/MiniMax-M2-4bit-DWQ

# Start Hermes:
hermes

How to use catalystsec/MiniMax-M2-4bit-DWQ with MLX LM:

# Install MLX LM
uv tool install mlx-lm

# Interactive chat REPL
mlx_lm.chat --model "catalystsec/MiniMax-M2-4bit-DWQ"

# Start the server
mlx_lm.server --model "catalystsec/MiniMax-M2-4bit-DWQ"
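With the server running, a request can be sent from Python using only the standard library. This is a minimal sketch, not an official client: the helper names `build_chat_request` and `chat` are hypothetical, the base URL assumes the server is listening on port 8080 (the port used in the Pi and Hermes configurations), and the response parsing assumes the usual OpenAI chat-completions shape.

```python
import json
import urllib.request


def build_chat_request(model, user_message, base_url="http://localhost:8080/v1"):
    """Build a POST request for the /chat/completions endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(model, user_message, base_url="http://localhost:8080/v1"):
    """Send one user message and return the assistant's reply text.

    Assumes an OpenAI-style response body: choices[0].message.content.
    """
    request = build_chat_request(model, user_message, base_url)
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Example call (requires the server to be running):
# print(chat("catalystsec/MiniMax-M2-4bit-DWQ", "Hello"))
```

Splitting request construction from the network call keeps the payload easy to inspect before anything is sent.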

# Calling the OpenAI-compatible server with curl

curl -X POST "http://localhost:8000/v1/chat/completions" \

-H "Content-Type: application/json" \

--data '{

"model": "catalystsec/MiniMax-M2-4bit-DWQ",

"messages": [

{"role": "user", "content": "Hello"}

]

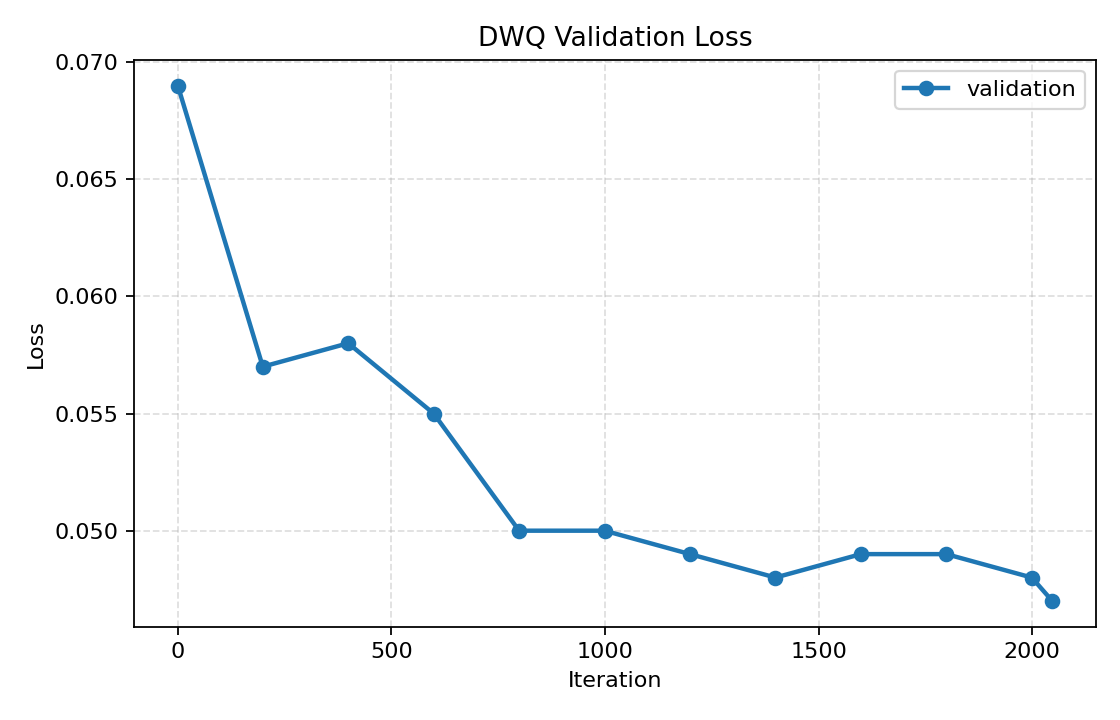

}'This model was quantized to 4-bit using DWQ with mlx-lm version 0.28.4.

| Parameter | Value |
|---|---|
| DWQ learning rate | 3e-7 |
| Batch size | 1 |
| Dataset | allenai/tulu-3-sft-mixture |
| Initial validation loss | 0.069 |
| Final validation loss | 0.047 |
| Relative KL reduction | ≈32 % |
| Tokens processed | ≈1.09 M |
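The relative reduction in the table can be reproduced from the two validation-loss values. This short check assumes that the reported validation loss is the KL-divergence measure the reduction refers to, which the table suggests but does not state outright.

```python
# Values copied from the table above.
initial_loss = 0.069
final_loss = 0.047

# Relative reduction = (initial - final) / initial
relative_reduction = (initial_loss - final_loss) / initial_loss

print(f"{relative_reduction:.1%}")  # prints 31.9%
```

Rounded to the nearest percent, this matches the ≈32 % figure in the table.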

pip install mlx-lm

from mlx_lm import load, generate

model, tokenizer = load("catalystsec/MiniMax-M2-4bit-DWQ")

prompt = "hello"

# Wrap the prompt in the chat template when the tokenizer provides one
if tokenizer.chat_template is not None:
    prompt = tokenizer.apply_chat_template(
        [{"role": "user", "content": prompt}],
        add_generation_prompt=True,
    )

response = generate(model, tokenizer, prompt=prompt, verbose=True)
print(response)

Quantization: 4-bit

Base model: MiniMaxAI/MiniMax-M2