Upload folder using huggingface_hub

- README.md +107 -157

- images/s2tt-covost-bleu.png +0 -0

- images/s2tt-covost-xcomet.png +0 -0

- images/s2tt-fleurs-bleu.png +0 -0

- images/s2tt-fleurs-xcomet.png +0 -0

- images/s2tt-mintzai-bleu.png +0 -0

- images/s2tt-mintzai-xcomet.png +0 -0

README.md

CHANGED

|

@@ -15,7 +15,6 @@ base_model:

|

|

| 15 |

- BSC-LT/salamandraTA-7b-instruct

|

| 16 |

---

|

| 17 |

|

| 18 |

-

|

| 19 |

<!--  -->

|

| 20 |

|

| 21 |

# SalamandraTAV-7b Model Card

|

|

@@ -59,7 +58,7 @@ The model is intended for both research and commercial use for the speech to tex

|

|

| 59 |

|

| 60 |

### Training Framework

|

| 61 |

|

| 62 |

-

The code used to train SalamandraTAV-7b is based on the [Transformers](https://huggingface.co/docs/transformers/) library, and

|

| 63 |

|

| 64 |

### Compute Infrastructure

|

| 65 |

|

|

@@ -84,7 +83,6 @@ Training was conducted on 4 nodes, each with the following specifications:

|

|

| 84 |

The easiest way to use the model is using the custom pipeline `multimodal_mt`:

|

| 85 |

```python

|

| 86 |

from transformers import pipeline

|

| 87 |

-

|

| 88 |

pipe = pipeline(

|

| 89 |

task="multimodal_mt",

|

| 90 |

model="langtech-veu/salamandra-TAV-7b",

|

|

@@ -122,14 +120,12 @@ translation = pipe(audio_path, mode="s2tt", src_lang="English", tgt_lang="Spanis

|

|

| 122 |

Run the S2TT pipeline, specifying the target language:

|

| 123 |

```python

|

| 124 |

transcription = pipe(audio_path, mode="asr", **generation_kwargs)

|

| 125 |

-

|

| 126 |

translation = pipe(transcription, mode="t2tt", tgt_lang="Spanish", **generation_kwargs)

|

| 127 |

```

|

| 128 |

|

| 129 |

Optionally, you can also specify the source language:

|

| 130 |

```python

|

| 131 |

transcription = pipe(audio_path, mode="asr", src_lang="English", **generation_kwargs)

|

| 132 |

-

|

| 133 |

translation = pipe(transcription, mode="t2tt", src_lang="English", tgt_lang="Spanish", **generation_kwargs)

|

| 134 |

```

|

| 135 |

|

|

@@ -140,14 +136,12 @@ This is a variant which uses a CoT mechanism to generate the translation by tran

|

|

| 140 |

Run the S2TT pipeline, specifying the target language:

|

| 141 |

```python

|

| 142 |

history = pipe(audio_path, return_chat_history=True, mode="asr", **generation_kwargs)

|

| 143 |

-

|

| 144 |

translation = pipe(history, mode="t2tt", tgt_lang="Spanish", **generation_kwargs)

|

| 145 |

```

|

| 146 |

|

| 147 |

Optionally, you can also specify the source language:

|

| 148 |

```python

|

| 149 |

history = pipe(audio_path, return_chat_history=True, mode="asr", src_lang="English", **generation_kwargs)

|

| 150 |

-

|

| 151 |

translation = pipe(history, mode="t2tt", src_lang="English", tgt_lang="Spanish", **generation_kwargs)

|

| 152 |

```

|

| 153 |

|

|

@@ -156,15 +150,23 @@ translation = pipe(history, mode="t2tt", src_lang="English", tgt_lang="Spanish",

|

|

| 156 |

If you are interested in getting the intermediate results, you can do it as follows:

|

| 157 |

```python

|

| 158 |

history = pipe(audio_path, return_chat_history=True, mode="asr", **generation_kwargs)

|

| 159 |

-

|

| 160 |

history = pipe(history, return_chat_history=True, mode="t2tt", tgt_lang="Spanish", **generation_kwargs)

|

| 161 |

-

|

| 162 |

-

|

|

|

|

| 163 |

```

|

| 164 |

|

| 165 |

|

| 166 |

## Training Data

|

| 167 |

-

|

| 168 |

|

| 169 |

### Automatic Speech Recognition Data

|

| 170 |

| Dataset | ast | ca | en | es | eu | gl | oc | pt | Total |

|

|

@@ -183,39 +185,35 @@ print(history.get_assistant_messages())

|

|

| 183 |

| Total (hours) | 0.5h |2010.5h|6729.5h|2497.5h| 544h | 181h | 0.5h | 184h |12147.5h|

|

| 184 |

|

| 185 |

|

| 186 |

-

|

| 187 |

-

|

| 188 |

### Speech-To-Text Translation

|

| 189 |

|

| 190 |

-

|

| 191 |

-

> [!NOTE] Note that source audios are shared over all the target languages.

|

| 192 |

-

|

| 193 |

-

| Common Voice Corpus 21.0 - SynthS2TT | ast | ca | en | es | eu | gl | oc | pt | Total (tgt) |

|

| 194 |

-

|:-------------------------------------|:------|:------|:------|:------|:------|:------|:------|:------|:------|

|

| 195 |

-

| **ast** | - | 13min | 19min | 16min | 7min | 12min | 9min | 17min | |

|

| 196 |

-

| **ca** | | - | | | | | | | |

|

| 197 |

-

| **en** | | | - | | | | | | |

|

| 198 |

-

| **es** | | | | - | | | | | |

|

| 199 |

-

| **eu** | | | | | - | | | | |

|

| 200 |

-

| **gl** | | | | | | - | | | |

|

| 201 |

-

| **oc** | | | | | | | - | | |

|

| 202 |

-

| **pt** | | | | | | | | - | |

|

| 203 |

-

| **Total (src)** | | | | | | | | | - |

|

| 204 |

-

|

| 205 |

-

| Other Datasets | ca-en | en-ca | en-es | en-pt | es-en | es-pt | pt-en | pt-es | Total |

|

| 206 |

|:----------------------------------------------------------------------------------------------------|:------|:------|:------|:------|:------|:------|:------|:------|:------|

|

| 207 |

| [CoVoST 2](https://github.com/facebookresearch/covost/) (train) | 135.5h| 430h | | | 113h | | 10.5h | | 689h |

|

| 208 |

| [Europarl-ST v1.1](https://www.mllp.upv.es/europarl-st/) (train) | | | 75.5h | 74h | 20.5h | 12.5h | 14.5h | 9.5h | 207h |

|

| 209 |

-

| Total (hours) |

|

| 210 |

-

|

| 211 |

|

| 212 |

-

|

| 213 |

|

| 214 |

### Text-To-Text Translation

|

| 215 |

|

| 216 |

-

|

| 217 |

|

| 218 |

-

|

| 219 |

|

| 220 |

|

| 221 |

|

|

@@ -272,23 +270,17 @@ transformation = jiwer.Compose([

|

|

| 272 |

|

| 273 |

| SalamandraTAV | ast | ca | en | es | gl | pt | Avg (tgt) |

|

| 274 |

|:--------------|-----------:|----------:|----------:|----------:|-----------:|-------------:|----------:|

|

| 275 |

-

| **ast** | - | **26.

|

| 276 |

-

| **ca** | **

|

| 277 |

-

| **en** | **

|

| 278 |

-

| **es** | **13.

|

| 279 |

-

| **gl** | **

|

| 280 |

-

| **pt** | **

|

| 281 |

-

| **Avg (src)** | **

|

| 282 |

-

|

| 283 |

-

|

| 284 |

-

|

| 285 |

-

|

| 286 |

-

| **ca** | - | - | **40.0** | 20.9 | 26.1 | 27.0 | 28.5 |

|

| 287 |

-

| **en** | - | 36.7 | - | 23.7 | 29.7 | **43.0** | **33.3** |

|

| 288 |

-

| **es** | - | 16.8 | **25.4** | - | 15.3 | 14.4 | 18.0 |

|

| 289 |

-

| **gl** | - | 24.0 | **34.6** | 19.4 | - | 23.0 | **25.3** |

|

| 290 |

-

| **pt** | - | 19.3 | **38.4** | 13.3 | 15.8 | - | 21.7 |

|

| 291 |

-

| **Avg (src)** | - | 22.9 | **33.0** | 18.0 | 20.5 | 24.4 | 23.8 |

|

| 292 |

|

| 293 |

The evaluation results reported here were obtained by manually running inference with the model on the test set. We verified that the results from SeamlessM4T are consistent with the [official results](https://huggingface.co/facebook/seamless-m4t-v2-large) reported by the authors.

|

| 294 |

|

|

@@ -296,23 +288,18 @@ The evaluation results reported here were obtained by manually running inference

|

|

| 296 |

**XCOMET-XL**

|

| 297 |

|

| 298 |

|

| 299 |

-

| SalamandraTAV | ca | en

|

| 300 |

-

|

| 301 |

-

| **ca** | - | 0.

|

| 302 |

-

| **en** | **0.

|

| 303 |

-

| **es** | **0.

|

| 304 |

-

| **gl** | **0.

|

| 305 |

-

| **pt** | **0.

|

| 306 |

-

| **Avg (src)** | **0.

|

| 307 |

-

|

| 308 |

-

|

| 309 |

-

|

| 310 |

-

|

| 311 |

-

| **en** | 0.8837 | - | 0.8941 | 0.8878 | 0.8982 | 0.8910 |

|

| 312 |

-

| **es** | 0.8523 | **0.9132** | - | 0.8754 | 0.8431 | 0.8710 |

|

| 313 |

-

| **gl** | 0.8617 | **0.9238** | 0.8775 | - | 0.8743 | 0.8843 |

|

| 314 |

-

| **pt** | 0.7687 | **0.9068** | 0.7983 | 0.8207 | - | 0.8236 |

|

| 315 |

-

| **Avg (src)** | 0.8416 | **0.9195** | 0.8685 | 0.8721 | 0.8758 | 0.8755 |

|

| 316 |

|

| 317 |

XCOMET-XL results for Asturian are not reported because it is not supported by this metric.

|

| 318 |

|

|

@@ -327,37 +314,29 @@ XCOMET-XL results for Asturian are not reported because it is not supported by t

|

|

| 327 |

|

| 328 |

| SalamandraTAV | ca | en |

|

| 329 |

|:--------------|----------:|----------:|

|

| 330 |

-

| **ca** | - | **37.

|

| 331 |

| **en** | 41.1 | - |

|

| 332 |

| **es** | - | **43.9** |

|

| 333 |

-

| **pt** | - |

|

| 334 |

-

| **Avg (src)**

|

| 335 |

|

| 336 |

-

|

| 337 |

-

|

| 338 |

-

|

| 339 |

-

| **en** | **44.0** | - |

|

| 340 |

-

| **es** | - | 42.2 |

|

| 341 |

-

| **pt** | - | **54.1** |

|

| 342 |

-

| **Avg (src)** | - | **44.4** |

|

| 343 |

|

| 344 |

**XCOMET-XL**

|

| 345 |

|

| 346 |

| SalamandraTAV | ca | en |

|

| 347 |

|:--------------|----------:|----------:|

|

| 348 |

-

| **ca** | - | 0.

|

| 349 |

-

| **en** | 0.

|

| 350 |

| **es** | - | 0.9241 |

|

| 351 |

-

| **pt** | - | 0.

|

| 352 |

-

| **Avg (src)** |

|

|

|

|

|

|

|

| 353 |

|

| 354 |

-

|

| 355 |

-

|:--------------|----------:|-----------:|

|

| 356 |

-

| **ca** | - | **0.8901** |

|

| 357 |

-

| **en** | **0.9086**| - |

|

| 358 |

-

| **es** | - | **0.9383** |

|

| 359 |

-

| **pt** | - | **0.9530** |

|

| 360 |

-

| **Avg (src)** | - | **0.9271** |

|

| 361 |

|

| 362 |

</details>

|

| 363 |

|

|

@@ -372,25 +351,23 @@ Mintzai-ST has overlap with basque_parliament_1, with which we have trained our

|

|

| 372 |

|

| 373 |

| SalamandraTAV | es | eu |

|

| 374 |

|:--------------|----------:|----------:|

|

| 375 |

-

| **es** | - |

|

| 376 |

-

| **eu** | **

|

| 377 |

|

| 378 |

-

|

| 379 |

-

|

| 380 |

-

|

| 381 |

-

| **eu** | 21.0 | - |

|

| 382 |

|

| 383 |

**XCOMET-XL**

|

| 384 |

|

| 385 |

| SalamandraTAV | es | eu |

|

| 386 |

|:--------------|----------:|----------:|

|

| 387 |

-

| **es** | - | **0.

|

| 388 |

-

| **eu** | **0.

|

| 389 |

|

| 390 |

-

|

| 391 |

-

|

| 392 |

-

|

| 393 |

-

| **eu** | 0.7185 | - |

|

| 394 |

|

| 395 |

</details>

|

| 396 |

|

|

@@ -403,22 +380,14 @@ Mintzai-ST has overlap with basque_parliament_1, with which we have trained our

|

|

| 403 |

|

| 404 |

**WER**

|

| 405 |

|

| 406 |

-

| SalamandraTAV |

|

| 407 |

-

|

| 408 |

-

| ast

|

| 409 |

-

| ca

|

| 410 |

-

| en

|

| 411 |

-

| es

|

| 412 |

-

| gl

|

| 413 |

-

| pt

|

| 414 |

-

|

| 415 |

-

| SeamlessM4T | |

|

| 416 |

-

|:--------------|----------:|

|

| 417 |

-

| ca | 7.89 |

|

| 418 |

-

| en | **9.15** |

|

| 419 |

-

| es | **5.64** |

|

| 420 |

-

| gl | **7.38** |

|

| 421 |

-

| pt | **19.62** |

|

| 422 |

|

| 423 |

</details>

|

| 424 |

|

|

@@ -429,22 +398,15 @@ Mintzai-ST has overlap with basque_parliament_1, with which we have trained our

|

|

| 429 |

|

| 430 |

**WER**

|

| 431 |

|

| 432 |

-

| SalamandraTAV |

|

| 433 |

-

|

| 434 |

-

| ast

|

| 435 |

-

| ca

|

| 436 |

-

| en

|

| 437 |

-

| es

|

| 438 |

-

| gl

|

| 439 |

-

| pt

|

| 440 |

-

|

| 441 |

-

| SeamlessM4T | |

|

| 442 |

-

|:--------------|----------:|

|

| 443 |

-

| ca | **5.74** |

|

| 444 |

-

| en | **7.66** |

|

| 445 |

-

| es | **5.30** |

|

| 446 |

-

| gl | **8.00** |

|

| 447 |

-

| pt | **7.94** |

|

| 448 |

|

| 449 |

</details>

|

| 450 |

|

|

@@ -455,15 +417,11 @@ Mintzai-ST has overlap with basque_parliament_1, with which we have trained our

|

|

| 455 |

|

| 456 |

**WER**

|

| 457 |

|

| 458 |

-

| SalamandraTAV |

|

| 459 |

-

|

| 460 |

-

| es

|

| 461 |

-

| eu

|

| 462 |

|

| 463 |

-

| SeamlessM4T | |

|

| 464 |

-

|:--------------|----------:|

|

| 465 |

-

| es | 9.24 |

|

| 466 |

-

| eu | 25.69 |

|

| 467 |

|

| 468 |

</details>

|

| 469 |

|

|

@@ -478,18 +436,12 @@ For further information, please send an email to <[email protected]>.

|

|

| 478 |

### Copyright

|

| 479 |

Copyright(c) 2025 by Language Technologies Lab, Barcelona Supercomputing Center.

|

| 480 |

|

| 481 |

-

[ ] Fede

|

| 482 |

-

|

| 483 |

### Funding

|

| 484 |

-

This work

|

| 485 |

-

|

| 486 |

-

[ ] Fede

|

| 487 |

|

| 488 |

### Acknowledgements

|

| 489 |

|

| 490 |

-

|

| 491 |

-

|

| 492 |

-

[ ] Fede

|

| 493 |

|

| 494 |

### Disclaimer

|

| 495 |

Be aware that the model may contain biases or other unintended distortions.

|

|

@@ -499,8 +451,6 @@ including those governing the use of Artificial Intelligence.

|

|

| 499 |

|

| 500 |

The Barcelona Supercomputing Center, as the owner and creator of the model, shall not be held liable for any outcomes resulting from third-party use.

|

| 501 |

|

| 502 |

-

[ ] Fede

|

| 503 |

-

|

| 504 |

### License

|

| 505 |

[Apache License, Version 2.0](https://www.apache.org/licenses/LICENSE-2.0)

|

| 506 |

|

|

@@ -510,11 +460,11 @@ The Barcelona Supercomputing Center, as the owner and creator of the model, shal

|

|

| 510 |

If you find our model useful, we would appreciate it if you could cite our work as follows:

|

| 511 |

|

| 512 |

```

|

| 513 |

-

@misc{

|

| 514 |

-

|

| 515 |

-

|

| 516 |

-

|

| 517 |

-

|

| 518 |

-

|

| 519 |

}

|

| 520 |

```

|

|

|

|

| 15 |

- BSC-LT/salamandraTA-7b-instruct

|

| 16 |

---

|

| 17 |

|

|

|

|

| 18 |

<!--  -->

|

| 19 |

|

| 20 |

# SalamandraTAV-7b Model Card

|

|

|

|

| 58 |

|

| 59 |

### Training Framework

|

| 60 |

|

| 61 |

+

The code used to train SalamandraTAV-7b is based on the [Transformers](https://huggingface.co/docs/transformers/) library, and will be publicly available soon.

|

| 62 |

|

| 63 |

### Compute Infrastructure

|

| 64 |

|

|

|

|

| 83 |

The easiest way to use the model is using the custom pipeline `multimodal_mt`:

|

| 84 |

```python

|

| 85 |

from transformers import pipeline

|

|

|

|

| 86 |

pipe = pipeline(

|

| 87 |

task="multimodal_mt",

|

| 88 |

model="langtech-veu/salamandra-TAV-7b",

|

|

|

|

| 120 |

Run the S2TT pipeline, specifying the target language:

|

| 121 |

```python

|

| 122 |

transcription = pipe(audio_path, mode="asr", **generation_kwargs)

|

|

|

|

| 123 |

translation = pipe(transcription, mode="t2tt", tgt_lang="Spanish", **generation_kwargs)

|

| 124 |

```

|

| 125 |

|

| 126 |

Optionally, you can also specify the source language:

|

| 127 |

```python

|

| 128 |

transcription = pipe(audio_path, mode="asr", src_lang="English", **generation_kwargs)

|

|

|

|

| 129 |

translation = pipe(transcription, mode="t2tt", src_lang="English", tgt_lang="Spanish", **generation_kwargs)

|

| 130 |

```
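The two cascaded calls above can be wrapped in a small helper. This is a minimal sketch: `cascaded_s2tt` is a hypothetical name that is not part of the pipeline, and it assumes `pipe` accepts exactly the keyword arguments shown in the snippets above (`mode`, `src_lang`, `tgt_lang`).

```python
def cascaded_s2tt(pipe, audio_path, tgt_lang, src_lang=None, **generation_kwargs):
    """Transcribe the audio (ASR), then translate the transcription (T2TT).

    Hypothetical helper: `pipe` is assumed to be the `multimodal_mt` pipeline,
    or any callable exposing the same keyword interface.
    """
    # Forward src_lang only when the caller provides it (the optional variant).
    lang_kwargs = {} if src_lang is None else {"src_lang": src_lang}
    transcription = pipe(audio_path, mode="asr", **lang_kwargs, **generation_kwargs)
    translation = pipe(
        transcription, mode="t2tt", tgt_lang=tgt_lang, **lang_kwargs, **generation_kwargs
    )
    return transcription, translation
```

Calling `cascaded_s2tt(pipe, audio_path, "Spanish", src_lang="English", **generation_kwargs)` would then issue the same two calls as the snippet with an explicit source language.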

|

| 131 |

|

|

|

|

| 136 |

Run the S2TT pipeline, specifying the target language:

|

| 137 |

```python

|

| 138 |

history = pipe(audio_path, return_chat_history=True, mode="asr", **generation_kwargs)

|

|

|

|

| 139 |

translation = pipe(history, mode="t2tt", tgt_lang="Spanish", **generation_kwargs)

|

| 140 |

```

|

| 141 |

|

| 142 |

Optionally, you can also specify the source language:

|

| 143 |

```python

|

| 144 |

history = pipe(audio_path, return_chat_history=True, mode="asr", src_lang="English", **generation_kwargs)

|

|

|

|

| 145 |

translation = pipe(history, mode="t2tt", src_lang="English", tgt_lang="Spanish", **generation_kwargs)

|

| 146 |

```

|

| 147 |

|

|

|

|

| 150 |

If you are interested in getting the intermediate results, you can do it as follows:

|

| 151 |

```python

|

| 152 |

history = pipe(audio_path, return_chat_history=True, mode="asr", **generation_kwargs)

|

| 153 |

+

transcription = history.get_assistant_messages()[-1]

|

| 154 |

history = pipe(history, return_chat_history=True, mode="t2tt", tgt_lang="Spanish", **generation_kwargs)

|

| 155 |

+

translation = history.get_assistant_messages()[-1]

|

| 156 |

+

history = pipe(history, return_chat_history=True, mode="lid", **generation_kwargs)

|

| 157 |

+

src_language = history.get_assistant_messages()[-1]

|

| 158 |

```
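The step-by-step calls above can be collected into one function that returns every intermediate result. This is a sketch under assumptions: `s2tt_with_intermediates` is a hypothetical name, and the only interface it relies on is what the snippet above shows (a `pipe` that returns a history object when `return_chat_history=True`, and `get_assistant_messages()` on that object).

```python
def s2tt_with_intermediates(pipe, audio_path, tgt_lang, **generation_kwargs):
    """Run ASR, T2TT and LID on a shared chat history and keep each output.

    Hypothetical helper mirroring the README snippet; not part of the pipeline.
    """
    # Step 1: transcription of the audio.
    history = pipe(audio_path, return_chat_history=True, mode="asr", **generation_kwargs)
    transcription = history.get_assistant_messages()[-1]

    # Step 2: translation conditioned on the history so far.
    history = pipe(
        history, return_chat_history=True, mode="t2tt", tgt_lang=tgt_lang, **generation_kwargs
    )
    translation = history.get_assistant_messages()[-1]

    # Step 3: language identification of the source.
    history = pipe(history, return_chat_history=True, mode="lid", **generation_kwargs)
    src_language = history.get_assistant_messages()[-1]

    return {
        "transcription": transcription,
        "translation": translation,
        "src_language": src_language,
    }
```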

|

| 159 |

|

| 160 |

|

| 161 |

## Training Data

|

| 162 |

+

|

| 163 |

+

### Global Summary

|

| 164 |

+

|

| 165 |

+

| Data Type | Hours | Samples | Tokens (target) | Tokens (total) |

|

| 166 |

+

|:--------------|:----------|:-----------|:------------------|:---------------|

|

| 167 |

+

| **ASR** | 12,147.5h | 5,207,686 | 582,567,674 | 4,180,709,878 |

|

| 168 |

+

| **S2TT** | 896h | 556,664 | 28,297,402 | 153,376,912 |

|

| 169 |

+

| **T2TT** | - | 2,242,354 | 112,837,123 | 220,328,525 |

|

| 170 |

|

| 171 |

### Automatic Speech Recognition Data

|

| 172 |

| Dataset | ast | ca | en | es | eu | gl | oc | pt | Total |

|

|

|

|

| 185 |

| Total (hours) | 0.5h |2010.5h|6729.5h|2497.5h| 544h | 181h | 0.5h | 184h |12147.5h|

|

| 186 |

|

| 187 |

|

|

|

|

|

|

|

| 188 |

### Speech-To-Text Translation

|

| 189 |

|

| 190 |

+

| Dataset | ca-en | en-ca | en-es | en-pt | es-en | es-pt | pt-en | pt-es | Total |

|

| 191 |

|:----------------------------------------------------------------------------------------------------|:------|:------|:------|:------|:------|:------|:------|:------|:------|

|

| 192 |

| [CoVoST 2](https://github.com/facebookresearch/covost/) (train) | 135.5h| 430h | | | 113h | | 10.5h | | 689h |

|

| 193 |

| [Europarl-ST v1.1](https://www.mllp.upv.es/europarl-st/) (train) | | | 75.5h | 74h | 20.5h | 12.5h | 14.5h | 9.5h | 207h |

|

| 194 |

+

| Total (hours) | 135.5h| 430h | 75.5h | 74h | 133.5h| 12.5h | 25h | 9.5h | 896h |
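The "Total (hours)" row is the per-direction sum over the two datasets. The short check below reproduces it from the values in the table; the dictionary layout and the function name `hours_per_direction` are illustrative choices, not something the README defines. Note that the per-direction totals add up to 895.5h, while the table reports 896h, presumably because the per-dataset totals (689h and 207h) were rounded before being added.

```python
# Hours per translation direction, copied from the table above.
covost = {"ca-en": 135.5, "en-ca": 430.0, "es-en": 113.0, "pt-en": 10.5}
europarl = {
    "en-es": 75.5, "en-pt": 74.0, "es-en": 20.5,
    "es-pt": 12.5, "pt-en": 14.5, "pt-es": 9.5,
}


def hours_per_direction(*datasets):
    """Sum the hours of each translation direction across datasets."""
    totals = {}
    for dataset in datasets:
        for direction, hours in dataset.items():
            totals[direction] = totals.get(direction, 0.0) + hours
    return totals


totals = hours_per_direction(covost, europarl)
print(totals["es-en"], totals["pt-en"], sum(totals.values()))
```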

|

|

|

|

| 195 |

|

|

|

|

| 196 |

|

| 197 |

### Text-To-Text Translation

|

| 198 |

|

| 199 |

+

For T2TT data, we filtered [Wikimedia](https://dumps.wikimedia.org/other/contenttranslation/20250801/) and [Tatoeba](https://downloads.tatoeba.org/exports/per_language/) datasets using the following criteria:

|

| 200 |

+

1. Sample obtained a [GlotLID v3](https://github.com/cisnlp/GlotLID) target language probability of at least 50%

|

| 201 |

+

2. Sample contains between 5 and 100 words

|

| 202 |

+

3. Sample obtained a [BLASER 2.0](https://huggingface.co/facebook/blaser-2.0-qe) score higher than 3.75

|

| 203 |

+

|

| 204 |

+

This filtering yields 102,845,818 target tokens for Wikimedia and 9,991,305 target tokens for Tatoeba, with the following language distribution:

|

| 205 |

+

|

| 206 |

+

| Language | As Source (samples) | (%) | As Target (samples) | (%) |

|

| 207 |

+

|:---------|--------------------:|:--------------|--------------------:|:--------------|

|

| 208 |

+

| **ast** | 848 | 0.0% | 11,800 | 0.5% |

|

| 209 |

+

| **ca** | 44,278 | 2.0% | 272,250 | 12.1% |

|

| 210 |

+

| **en** | 1,353,784 | 60.4% | 412,166 | 18.4% |

|

| 211 |

+

| **es** | 533,330 | 23.8% | 862,276 | 38.5% |

|

| 212 |

+

| **eu** | 5,266 | 0.2% | 79,534 | 3.5% |

|

| 213 |

+

| **gl** | 14,052 | 0.6% | 85,510 | 3.8% |

|

| 214 |

+

| **pt** | 290,754 | 13.0% | 518,776 | 23.1% |

|

| 215 |

+

| **Total**| 2,242,354 | 100.0% | 2,242,354 | 100.0% |
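The three filtering criteria listed above can be expressed as a single predicate. The sketch below assumes the GlotLID probability and the BLASER 2.0 score have already been computed for each sample; it does not call either model. The function name `keep_sample`, the constant names, and the choice to count whitespace-separated words on the target text are assumptions made for illustration.

```python
MIN_LID_PROB = 0.5               # criterion 1: target-language probability of at least 50%
MIN_WORDS, MAX_WORDS = 5, 100    # criterion 2: between 5 and 100 words
MIN_BLASER = 3.75                # criterion 3: BLASER 2.0 score strictly higher than 3.75


def keep_sample(target_text, lid_prob, blaser_score):
    """Return True if a sample passes all three filters.

    `lid_prob` and `blaser_score` are assumed to be precomputed floats.
    Word counting by whitespace split is an assumption, not the documented method.
    """
    n_words = len(target_text.split())
    return (
        lid_prob >= MIN_LID_PROB
        and MIN_WORDS <= n_words <= MAX_WORDS
        and blaser_score > MIN_BLASER
    )
```

A sample with a score of exactly 3.75 is rejected here because the criterion says "higher than", whereas a language probability of exactly 0.5 is kept because that criterion says "at least".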

|

| 216 |

|

|

|

|

| 217 |

|

| 218 |

|

| 219 |

|

|

|

|

| 270 |

|

| 271 |

| SalamandraTAV | ast | ca | en | es | gl | pt | Avg (tgt) |

|

| 272 |

|:--------------|-----------:|----------:|----------:|----------:|-----------:|-------------:|----------:|

|

| 273 |

+

| **ast** | - | **26.4** | **27.7** | **17.1** | **19.7** | **21.6** | **22.5** |

|

| 274 |

+

| **ca** | **22.9** | - | 39.7 | **22.2** | **29.8** | **31.0** | **29.1** |

|

| 275 |

+

| **en** | **24.3** | **39.1** | - | **25.5** | **32.3** | **43.0** | **32.8** |

|

| 276 |

+

| **es** | **13.7** | **20.8** | 24.8 | - | **18.8** | **19.1** | **19.4** |

|

| 277 |

+

| **gl** | **18.1** | **29.4** | 32.4 | **20.6** | - | **26.0** | **25.3** |

|

| 278 |

+

| **pt** | **19.9** | **30.6** | 37.0 | **20.6** | **25.6** | - | **26.7** |

|

| 279 |

+

| **Avg (src)** | **19.8** | **29.3** | 32.3 | **21.2** | **25.2** | **28.1** | **26.0** |
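Reading the labels of the table above, each row is a source language and each column a target language: "Avg (tgt)" averages a row over its targets, and "Avg (src)" averages a column over its sources. The sketch below recomputes both from the rounded scores in the table; the nested-dictionary layout and the names `bleu`, `avg_tgt` and `avg_src` are illustrative only.

```python
from statistics import mean

# BLEU scores from the table above, indexed as bleu[source][target].
bleu = {
    "ast": {"ca": 26.4, "en": 27.7, "es": 17.1, "gl": 19.7, "pt": 21.6},
    "ca": {"ast": 22.9, "en": 39.7, "es": 22.2, "gl": 29.8, "pt": 31.0},
    "en": {"ast": 24.3, "ca": 39.1, "es": 25.5, "gl": 32.3, "pt": 43.0},
    "es": {"ast": 13.7, "ca": 20.8, "en": 24.8, "gl": 18.8, "pt": 19.1},
    "gl": {"ast": 18.1, "ca": 29.4, "en": 32.4, "es": 20.6, "pt": 26.0},
    "pt": {"ast": 19.9, "ca": 30.6, "en": 37.0, "es": 20.6, "gl": 25.6},
}

# "Avg (tgt)": mean over the targets of each source row.
avg_tgt = {src: mean(row.values()) for src, row in bleu.items()}

# "Avg (src)": mean over the sources of each target column.
avg_src = {
    tgt: mean(row[tgt] for row in bleu.values() if tgt in row)
    for tgt in bleu
}

# Bottom-right cell: mean over all 30 directions.
overall = mean(score for row in bleu.values() for score in row.values())

print({k: round(v, 1) for k, v in avg_tgt.items()})
print({k: round(v, 1) for k, v in avg_src.items()})
print(round(overall, 1))
```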

|

| 280 |

+

|

| 281 |

+

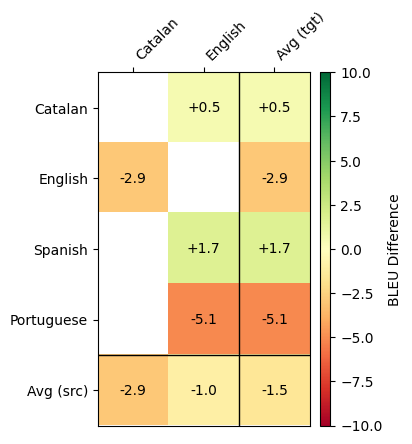

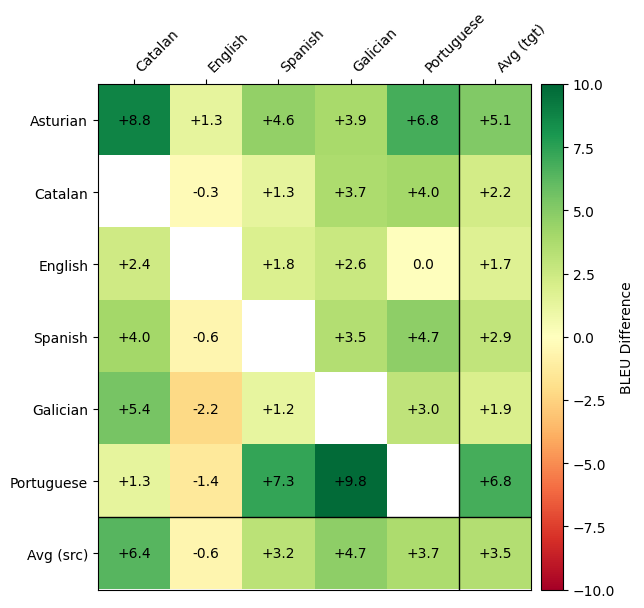

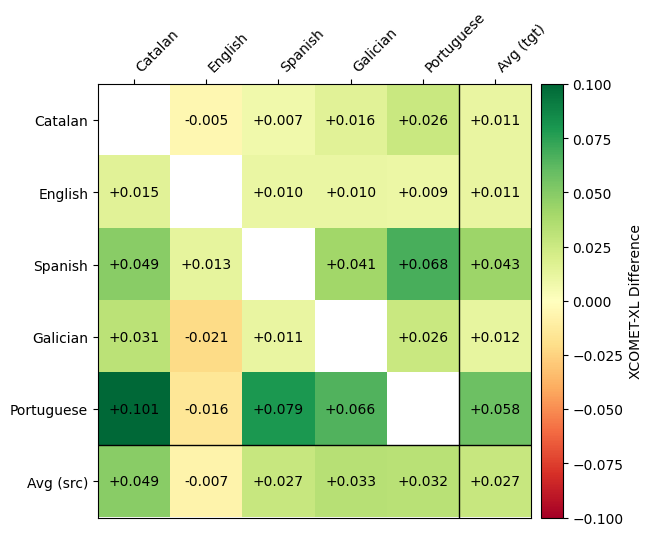

**SalamandraTAV vs SeamlessM4T BLEU Difference**

|

| 282 |

+

|

| 283 |

+

|

| 284 |

|

| 285 |

The evaluation results reported here were obtained by manually running inference with the model on the test set. We verified that the results from SeamlessM4T are consistent with the [official results](https://huggingface.co/facebook/seamless-m4t-v2-large) reported by the authors.

|

| 286 |

|

|

|

|

| 288 |

**XCOMET-XL**

|

| 289 |

|

| 290 |

|

| 291 |

+

| SalamandraTAV | ca | en | es | gl | pt | Avg (tgt) |

|

| 292 |

+

|:---------------|-----------:|-----------:|------------:|------------:|------------:|-----------:|

|

| 293 |

+

| **ca** | - | 0.9289 | **0.9114** | **0.9202** | **0.9136** | **0.9185** |

|

| 294 |

+

| **en** | **0.8987** | - | **0.9045** | **0.8981** | **0.9077** | **0.9023** |

|

| 295 |

+

| **es** | **0.9013** | **0.9257** | - | **0.9162** | **0.9110** | **0.9136** |

|

| 296 |

+

| **gl** | **0.8930** | 0.9024 | **0.8890** | - | **0.9005** | **0.8962** |

|

| 297 |

+

| **pt** | **0.8697** | 0.8912 | **0.8775** | **0.8862** | - | **0.8812** |

|

| 298 |

+

| **Avg (src)** | **0.8907** | 0.9121 | **0.8956** | **0.9052** | **0.9082** | **0.9023** |

|

| 299 |

+

|

| 300 |

+

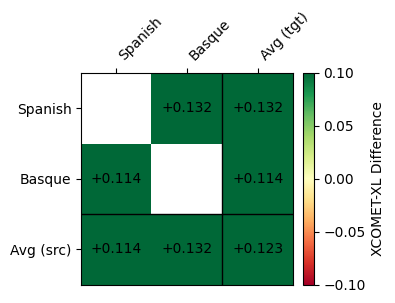

**SalamandraTAV vs SeamlessM4T XCOMET-XL Difference**

|

| 301 |

+

|

| 302 |

+

|

| 303 |

|

| 304 |

XCOMET-XL results for Asturian are not reported because it is not supported by this metric.

|

| 305 |

|

|

|

|

| 314 |

|

| 315 |

| SalamandraTAV | ca | en |

|

| 316 |

|:--------------|----------:|----------:|

|

| 317 |

+

| **ca** | - | **37.3** |

|

| 318 |

| **en** | 41.1 | - |

|

| 319 |

| **es** | - | **43.9** |

|

| 320 |

+

| **pt** | - | 49.0 |

|

| 321 |

+

| **Avg (src)** | 41.1 | 43.4 |

|

| 322 |

|

| 323 |

+

**SalamandraTAV vs SeamlessM4T BLEU Difference**

|

| 324 |

+

|

| 325 |

+

|

|

|

|

|

|

|

|

|

|

|

|

|

| 326 |

|

| 327 |

**XCOMET-XL**

|

| 328 |

|

| 329 |

| SalamandraTAV | ca | en |

|

| 330 |

|:--------------|----------:|----------:|

|

| 331 |

+

| **ca** | - | 0.8835 |

|

| 332 |

+

| **en** | 0.8454 | - |

|

| 333 |

| **es** | - | 0.9241 |

|

| 334 |

+

| **pt** | - | 0.8967 |

|

| 335 |

+

| **Avg (src)** | 0.8454 | 0.9014 |

|

| 336 |

+

|

| 337 |

+

**SalamandraTAV vs SeamlessM4T XCOMET-XL Difference**

|

| 338 |

|

| 339 |

+

|

| 340 |

|

| 341 |

</details>

|

| 342 |

|

|

|

|

| 351 |

|

| 352 |

| SalamandraTAV | es | eu |

|

| 353 |

|:--------------|----------:|----------:|

|

| 354 |

+

| **es** | - | **21.7** |

|

| 355 |

+

| **eu** | **26.8** | - |

|

| 356 |

|

| 357 |

+

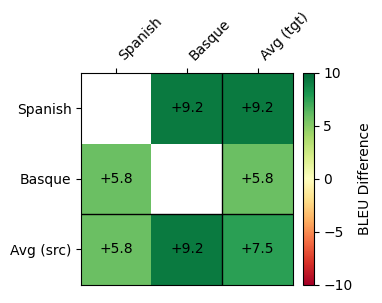

**SalamandraTAV vs SeamlessM4T BLEU Difference**

|

| 358 |

+

|

| 359 |

+

|

|

|

|

| 360 |

|

| 361 |

**XCOMET-XL**

|

| 362 |

|

| 363 |

| SalamandraTAV | es | eu |

|

| 364 |

|:--------------|----------:|----------:|

|

| 365 |

+

| **es** | - | **0.8000**|

|

| 366 |

+

| **eu** | **0.8329**| - |

|

| 367 |

|

| 368 |

+

**SalamandraTAV vs SeamlessM4T XCOMET-XL Difference**

|

| 369 |

+

|

| 370 |

+

|

|

|

|

| 371 |

|

| 372 |

</details>

|

| 373 |

|

|

|

|

| 380 |

|

| 381 |

**WER**

|

| 382 |

|

| 383 |

+

| | SalamandraTAV | SeamlessM4T | Whisper v3 | Spire |

|

| 384 |

+

|:--------------|--------------:|------------:|------------:|------:|

|

| 385 |

+

| **ast** | **29.35** | - | - | - |

|

| 386 |

+

| **ca** | **7.34** | 7.89 | 14.11 | - |

|

| 387 |

+

| **en** | 16.65 | **9.15** | 11.13 | 22.56 |

|

| 388 |

+

| **es** | 7.72 | 5.64 | **5.21** | - |

|

| 389 |

+

| **gl** | 7.83 | **7.38** | 14.50 | - |

|

| 390 |

+

| **pt** | 21.80 | 19.62 | **6.85** | - |
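For readers unfamiliar with the metric, WER is the word-level edit distance between reference and hypothesis divided by the number of reference words; the tables report it as a percentage. The pure-Python sketch below illustrates the definition only. It is not the evaluation code behind these numbers: the README's evaluation applies a `jiwer.Compose` text normalization that is not reproduced here, and the function name `wer` is chosen for illustration.

```python
def wer(reference, hypothesis):
    """Word error rate: word-level Levenshtein distance / number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the processed reference prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from the hypothesis
            insertion = curr[j - 1] + 1  # extra word in the hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)
```

Multiplying the returned value by 100 gives a figure on the same scale as the tables.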

|

| 391 |

|

| 392 |

</details>

|

| 393 |

|

|

|

|

| 398 |

|

| 399 |

**WER**

|

| 400 |

|

| 401 |

+

| | SalamandraTAV | SeamlessM4T | Whisper v3 | Spire |

|

| 402 |

+

|:--------------|--------------:|------------:|------------:|------:|

|

| 403 |

+

| **ast** | **25.68** | - | - | - |

|

| 404 |

+

| **ca** | 7.34 | 5.74 | **4.88** | - |

|

| 405 |

+

| **en** | 8.35 | 7.66 | **4.81** | 9.07 |

|

| 406 |

+

| **es** | 6.04 | 5.30 | **2.95** | - |

|

| 407 |

+

| **gl** | 11.83 | **8.00** | 13.61 | - |

|

| 408 |

+

| **pt** | 10.55 | 7.94 | **3.97** | - |

|

| 409 |

+

|

|

| 410 |

|

| 411 |

</details>

|

| 412 |

|

|

|

|

| 417 |

|

| 418 |

**WER**

|

| 419 |

|

| 420 |

+

| | SalamandraTAV | SeamlessM4T | Whisper v3 |

|

| 421 |

+

|:--------------|--------------:|------------:|------------:|

|

| 422 |

+

| **es** | 8.21 | 9.24 | **7.37** |

|

| 423 |

+

| **eu** | **19.34** | 25.69 | 48.64 |

|

| 424 |

|

|

|

|

|

|

|

|

|

|

|

|

|

| 425 |

|

| 426 |

</details>

|

| 427 |

|

|

|

|

| 436 |

### Copyright

|

| 437 |

Copyright(c) 2025 by Language Technologies Lab, Barcelona Supercomputing Center.

|

| 438 |

|

|

|

|

|

|

|

| 439 |

### Funding

|

| 440 |

+

This work is funded by the Ministerio para la Transformación Digital y de la Función Pública - Funded by EU – NextGenerationEU within the framework of the project Modelos del Lenguaje.

|

|

|

|

|

|

|

| 441 |

|

| 442 |

### Acknowledgements

|

| 443 |

|

| 444 |

+

The author thankfully acknowledges the computer resources at MareNostrum and the technical support provided by Barcelona Supercomputing Center (RES-IM-2025-2-0027).

|

|

|

|

|

|

|

| 445 |

|

| 446 |

### Disclaimer

|

| 447 |

Be aware that the model may contain biases or other unintended distortions.

|

|

|

|

| 451 |

|

| 452 |

The Barcelona Supercomputing Center, as the owner and creator of the model, shall not be held liable for any outcomes resulting from third-party use.

|

| 453 |

|

|

|

|

|

|

|

| 454 |

### License

|

| 455 |

[Apache License, Version 2.0](https://www.apache.org/licenses/LICENSE-2.0)

|

| 456 |

|

|

|

|

| 460 |

If you find our model useful, we would appreciate it if you could cite our work as follows:

|

| 461 |

|

| 462 |

```

|

| 463 |

+

@misc{bsclt2025salamandraTAV7b,

|

| 464 |

+

title={salamandra-TAV-7b: a Speech-To-Text Translation model based on an end-to-end Speech LLM for Iberian Languages.},

|

| 465 |

+

author={…; España-Bonet, Cristina},

|

| 466 |

+

organization={Barcelona Supercomputing Center},

|

| 467 |

+

url={https://huggingface.co/langtech-veu/salamandra-TAV-7b},

|

| 468 |

+

year={2025}

|

| 469 |

}

|

| 470 |

```

|

images/s2tt-covost-bleu.png

ADDED

|

images/s2tt-covost-xcomet.png

ADDED

|

images/s2tt-fleurs-bleu.png

ADDED

|

images/s2tt-fleurs-xcomet.png

ADDED

|

images/s2tt-mintzai-bleu.png

ADDED

|

images/s2tt-mintzai-xcomet.png

ADDED

|