Add README and supporting files for Nemotron Nano 12B v2 GGUF Q4_K_M

Browse files- .gitattributes +1 -0

- README.md +128 -3

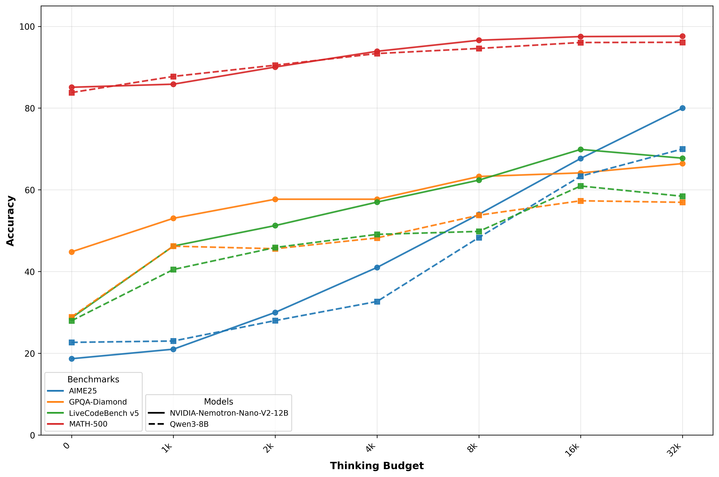

- acc-vs-budget.png +0 -0

- bias.md +10 -0

- config.json +57 -0

- configuration_nemotron_h.py +245 -0

- explainability.md +14 -0

- generation_config.json +11 -0

- modeling_nemotron_h.py +1638 -0

- nemotron_toolcall_parser_no_streaming.py +110 -0

- privacy.md +13 -0

- safety.md +9 -0

- special_tokens_map.json +23 -0

- tokenizer.json +3 -0

- tokenizer_config.json +0 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

README.md

CHANGED

|

@@ -1,3 +1,128 @@

|

|

| 1 |

-

---

|

| 2 |

-

license:

|

| 3 |

-

---

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: other

|

| 3 |

+

base_model: nvidia/NVIDIA-Nemotron-Nano-12B-v2

|

| 4 |

+

library_name: llama.cpp

|

| 5 |

+

tags:

|

| 6 |

+

- gguf

|

| 7 |

+

- quantized

|

| 8 |

+

- 4-bit

|

| 9 |

+

- Q4_K_M

|

| 10 |

+

- nemotron

|

| 11 |

+

- 12B

|

| 12 |

+

- tool-calling

|

| 13 |

+

- thinking

|

| 14 |

+

- 128k

|

| 15 |

+

- multilingual

|

| 16 |

+

- llama.cpp

|

| 17 |

+

- ollama

|

| 18 |

+

---

|

| 19 |

+

|

| 20 |

+

# NVIDIA Nemotron Nano 12B v2 - GGUF Q4_K_M (7GB)

|

| 21 |

+

|

| 22 |

+

This repository provides a 4-bit quantized GGUF build of NVIDIA Nemotron Nano 12B v2 using Q4_K_M, reducing the on-disk size to approximately 7GB from roughly 23GB for the original full precision weights, while preserving core capabilities.

|

| 23 |

+

|

| 24 |

+

**Upstream base model:** [nvidia/NVIDIA-Nemotron-Nano-12B-v2](https://huggingface.co/nvidia/NVIDIA-Nemotron-Nano-12B-v2)

|

| 25 |

+

|

| 26 |

+

**SHA256:** `82ea4805d2f9f37e3c67b06768141ff58e43fb0dcd3983a82e9c2f481eb7fea8`

|

| 27 |

+

|

| 28 |

+

## What's included

|

| 29 |

+

|

| 30 |

+

- `model-q4.gguf` (7.0GB)

|

| 31 |

+

- `tokenizer.json`

|

| 32 |

+

- `tokenizer_config.json`

|

| 33 |

+

- `special_tokens_map.json`

|

| 34 |

+

- `config.json`

|

| 35 |

+

- `generation_config.json`

|

| 36 |

+

- `configuration_nemotron_h.py`

|

| 37 |

+

- `modeling_nemotron_h.py`

|

| 38 |

+

- `nemotron_toolcall_parser_no_streaming.py`

|

| 39 |

+

- `bias.md`, `explainability.md`, `privacy.md`, `safety.md`

|

| 40 |

+

- `acc-vs-budget.png`

|

| 41 |

+

- `README.md`

|

| 42 |

+

|

| 43 |

+

## Capabilities

|

| 44 |

+

|

| 45 |

+

- ✓ Tool calling support via preserved special tokens and helper parser script

|

| 46 |

+

- ✓ Thinking mode tokens for structured reasoning

|

| 47 |

+

- ✓ Long-context up to 128k window

|

| 48 |

+

- ✓ Multilingual general-purpose LLM behavior

|

| 49 |

+

|

| 50 |

+

**Note:** GGUF inference backends may vary in their native support for tool-calling integrations; use the included parser or your own orchestration as needed.

|

| 51 |

+

|

| 52 |

+

## Hardware notes

|

| 53 |

+

|

| 54 |

+

- **Disk space:** 8GB free recommended for the quantized file and metadata

|

| 55 |

+

- **CPU inference:** 16GB RAM recommended for 4k contexts; 32GB suggested for comfortable operation. For 128k contexts, memory usage grows significantly and 64 to 128GB system RAM may be required

|

| 56 |

+

- **GPU offload:** 8 to 16GB VRAM can accelerate decoding with llama.cpp `-ngl` offloading; very long contexts may require 24 to 48GB VRAM or hybrid CPU plus GPU offload

|

| 57 |

+

- **Throughput:** Depends on backend, threads, and offload settings

|

| 58 |

+

|

| 59 |

+

## Usage

|

| 60 |

+

|

| 61 |

+

### llama.cpp

|

| 62 |

+

|

| 63 |

+

Build llama.cpp, then run:

|

| 64 |

+

|

| 65 |

+

**Generate:**

|

| 66 |

+

```bash

|

| 67 |

+

./llama-cli -m model-q4.gguf -p "Hello, Nemotron." -n 128 -t 8 -c 4096 -ngl 35

|

| 68 |

+

```

|

| 69 |

+

|

| 70 |

+

**Server:**

|

| 71 |

+

```bash

|

| 72 |

+

./llama-server -m model-q4.gguf -c 4096 -ngl 35

|

| 73 |

+

```

|

| 74 |

+

|

| 75 |

+

For very long contexts, increase `-c` accordingly and ensure sufficient RAM or VRAM for KV cache.

|

| 76 |

+

|

| 77 |

+

### Python via llama-cpp-python

|

| 78 |

+

|

| 79 |

+

```bash

|

| 80 |

+

pip install llama-cpp-python

|

| 81 |

+

```

|

| 82 |

+

|

| 83 |

+

```python

|

| 84 |

+

from llama_cpp import Llama

|

| 85 |

+

llm = Llama(model_path="model-q4.gguf", n_ctx=4096, n_threads=8)

|

| 86 |

+

out = llm("Write a short greeting.", max_tokens=128)

|

| 87 |

+

print(out)

|

| 88 |

+

```

|

| 89 |

+

|

| 90 |

+

### Ollama

|

| 91 |

+

|

| 92 |

+

Create a Modelfile referencing this repo, then create and run:

|

| 93 |

+

|

| 94 |

+

**Modelfile:**

|

| 95 |

+

```

|

| 96 |

+

FROM hf.co/Avarok/nvidia-nemotron-nano-12b-v2-q4_k_m

|

| 97 |

+

PARAMETER num_ctx 4096

|

| 98 |

+

```

|

| 99 |

+

|

| 100 |

+

**Commands:**

|

| 101 |

+

```bash

|

| 102 |

+

ollama create nemotron-nano-12b-q4km -f Modelfile

|

| 103 |

+

ollama run nemotron-nano-12b-q4km

|

| 104 |

+

```

|

| 105 |

+

|

| 106 |

+

**Note:** Ollama versions and syntax may evolve; consult Ollama docs if the Modelfile interface changes.

|

| 107 |

+

|

| 108 |

+

## License and attribution

|

| 109 |

+

|

| 110 |

+

- **Base model:** NVIDIA Nemotron Nano 12B v2

|

| 111 |

+

- **License:** This GGUF quantized derivative is subject to the original model's license and terms. See the [upstream model card](https://huggingface.co/nvidia/NVIDIA-Nemotron-Nano-12B-v2) and license. By using this repository you agree to comply with NVIDIA's licensing for Nemotron models

|

| 112 |

+

- **Attribution:** If you use this model, please attribute both NVIDIA for the base model and this repository for the quantized packaging

|

| 113 |

+

|

| 114 |

+

## Reproducibility

|

| 115 |

+

|

| 116 |

+

This artifact was produced by converting the upstream weights to GGUF and quantizing with Q4_K_M. An equivalent quantization command with llama.cpp tools is:

|

| 117 |

+

|

| 118 |

+

```bash

|

| 119 |

+

llama-quantize input.gguf model-q4.gguf Q4_K_M

|

| 120 |

+

```

|

| 121 |

+

|

| 122 |

+

Exact commands may differ based on the conversion workflow for the upstream model version.

|

| 123 |

+

|

| 124 |

+

## Safety

|

| 125 |

+

|

| 126 |

+

Review the included bias, privacy, and safety documents. As with all LLMs, outputs may be inaccurate or unsafe without proper safeguards and human oversight.

|

| 127 |

+

|

| 128 |

+

|

acc-vs-budget.png

ADDED

|

bias.md

ADDED

|

@@ -0,0 +1,10 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

| Field | Response |

|

| 2 |

+

| :---- | :---- |

|

| 3 |

+

| Participation considerations from adversely impacted groups [protected classes](https://www.senate.ca.gov/content/protected-classes) in model design and testing: | None |

|

| 4 |

+

| Bias Metric (If Measured): | [BBQ Accuracy Scores in Ambiguous Contexts](https://github.com/nyu-mll/BBQ/) |

|

| 5 |

+

| Which characteristic (feature) show(s) the greatest difference in performance?: | The model shows high variance in the characteristics when it is used with a high temperature. |

|

| 6 |

+

| Which feature(s) have the worst performance overall? | Age |

|

| 7 |

+

| Measures taken to mitigate against unwanted bias: | None |

|

| 8 |

+

| If using internal data, description of methods implemented in data acquisition or processing, if any, to address the prevalence of identifiable biases in the training, testing, and validation data: | The training datasets contain a large amount of synthetic data generated by LLMs. We manually curated prompts. |

|

| 9 |

+

| Tools used to assess statistical imbalances and highlight patterns that may introduce bias into AI models: | [BBQ](https://github.com/nyu-mll/BBQ/) |

|

| 10 |

+

| Tools used to assess statistical imbalances and highlight patterns that may introduce bias into AI models: | These datasets, such as Common Crawl, CC-News, and Wikimedia, do not collectively or exhaustively represent all demographic groups (and proportionally therein). For instance, these datasets do not contain explicit mentions of demographic classes such as age, gender, or ethnicity in over 85% of samples. In the subset where such terms are present, Common Crawl and CC-News contain notable representational skews—for example, references to "male" significantly outnumber those to "female," and mentions of "White" are the most frequent among ethnic identifiers. To mitigate these imbalances, we recommend considering evaluation techniques such as bias audits, fine-tuning with demographically balanced datasets, and mitigation strategies like counterfactual data augmentation to align with the desired model behavior. This evaluation used a 3,000-sample subset per dataset, identified as the optimal threshold for maximizing embedder accuracy, and includes outputs from uncalibrated embedders; as such, certain limitations may exist in the reliability of the embedding. |

|

config.json

ADDED

|

@@ -0,0 +1,57 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"NemotronHForCausalLM"

|

| 4 |

+

],

|

| 5 |

+

"attention_bias": false,

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"head_dim": 128,

|

| 8 |

+

"auto_map": {

|

| 9 |

+

"AutoConfig": "configuration_nemotron_h.NemotronHConfig",

|

| 10 |

+

"AutoModelForCausalLM": "modeling_nemotron_h.NemotronHForCausalLM"

|

| 11 |

+

},

|

| 12 |

+

"bos_token_id": 1,

|

| 13 |

+

"chunk_size": 128,

|

| 14 |

+

"conv_kernel": 4,

|

| 15 |

+

"eos_token_id": 12,

|

| 16 |

+

"hidden_dropout": 0.0,

|

| 17 |

+

"hidden_size": 5120,

|

| 18 |

+

"hybrid_override_pattern": "M-M-M-M*-M-M-M-M*-M-M-M-M*-M-M-M-M*-M-M-M-M*-M-M-M-M*-M-M-M-M-",

|

| 19 |

+

"initializer_range": 0.02,

|

| 20 |

+

"intermediate_size": 20480,

|

| 21 |

+

"layer_norm_epsilon": 1e-05,

|

| 22 |

+

"mamba_head_dim": 80,

|

| 23 |

+

"mamba_hidden_act": "silu",

|

| 24 |

+

"mamba_num_heads": 128,

|

| 25 |

+

"mamba_proj_bias": false,

|

| 26 |

+

"max_position_embeddings": 131072,

|

| 27 |

+

"mlp_bias": false,

|

| 28 |

+

"mlp_hidden_act": "relu2",

|

| 29 |

+

"model_type": "nemotron_h",

|

| 30 |

+

"n_groups": 8,

|

| 31 |

+

"num_attention_heads": 40,

|

| 32 |

+

"num_hidden_layers": 62,

|

| 33 |

+

"num_key_value_heads": 8,

|

| 34 |

+

"num_logits_to_keep": 1,

|

| 35 |

+

"pad_token_id": 0,

|

| 36 |

+

"rescale_prenorm_residual": true,

|

| 37 |

+

"residual_in_fp32": false,

|

| 38 |

+

"rms_norm_eps": 1e-05,

|

| 39 |

+

"sliding_window": null,

|

| 40 |

+

"ssm_state_size": 128,

|

| 41 |

+

"tie_word_embeddings": false,

|

| 42 |

+

"time_step_floor": 0.0001,

|

| 43 |

+

"time_step_limit": [

|

| 44 |

+

0.0,

|

| 45 |

+

Infinity

|

| 46 |

+

],

|

| 47 |

+

"time_step_max": 0.1,

|

| 48 |

+

"time_step_min": 0.001,

|

| 49 |

+

"time_step_rank": 256,

|

| 50 |

+

"torch_dtype": "bfloat16",

|

| 51 |

+

"transformers_version": "4.51.3",

|

| 52 |

+

"use_bias": false,

|

| 53 |

+

"use_cache": true,

|

| 54 |

+

"use_conv_bias": true,

|

| 55 |

+

"use_mamba_kernels": true,

|

| 56 |

+

"vocab_size": 131072

|

| 57 |

+

}

|

configuration_nemotron_h.py

ADDED

|

@@ -0,0 +1,245 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# coding=utf-8

|

| 2 |

+

# Copyright 2024 AI21 Labs Ltd. and the HuggingFace Inc. team. All rights reserved.

|

| 3 |

+

# Copyright (c) 2025, NVIDIA CORPORATION. All rights reserved.

|

| 4 |

+

#

|

| 5 |

+

# Licensed under the Apache License, Version 2.0 (the "License");

|

| 6 |

+

# you may not use this file except in compliance with the License.

|

| 7 |

+

# You may obtain a copy of the License at

|

| 8 |

+

#

|

| 9 |

+

# http://www.apache.org/licenses/LICENSE-2.0

|

| 10 |

+

#

|

| 11 |

+

# Unless required by applicable law or agreed to in writing, software

|

| 12 |

+

# distributed under the License is distributed on an "AS IS" BASIS,

|

| 13 |

+

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 14 |

+

# See the License for the specific language governing permissions and

|

| 15 |

+

# limitations under the License.

|

| 16 |

+

"""NemotronH model configuration"""

|

| 17 |

+

|

| 18 |

+

import re

|

| 19 |

+

|

| 20 |

+

from transformers.configuration_utils import PretrainedConfig

|

| 21 |

+

from transformers.utils import logging

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

logger = logging.get_logger(__name__)

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

class NemotronHConfig(PretrainedConfig):

|

| 28 |

+

r"""

|

| 29 |

+

This is the configuration class to store the configuration of a [`NemotronHModel`]. It is used to instantiate a

|

| 30 |

+

NemotronH model according to the specified arguments, defining the model architecture. Instantiating a configuration

|

| 31 |

+

with the defaults will yield a similar configuration to that of the NemotronH-v0.1 model.

|

| 32 |

+

|

| 33 |

+

[todo](todo)

|

| 34 |

+

|

| 35 |

+

Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

|

| 36 |

+

documentation from [`PretrainedConfig`] for more information.

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

Args:

|

| 40 |

+

vocab_size (`int`, *optional*, defaults to 131072):

|

| 41 |

+

Vocabulary size of the NemotronH model. Defines the number of different tokens that can be represented by the

|

| 42 |

+

`inputs_ids` passed when calling [`NemotronHModel`]

|

| 43 |

+

tie_word_embeddings (`bool`, *optional*, defaults to `False`):

|

| 44 |

+

Whether the model's input and output word embeddings should be tied. Note that this is only relevant if the

|

| 45 |

+

model has a output word embedding layer.

|

| 46 |

+

hidden_size (`int`, *optional*, defaults to 4096):

|

| 47 |

+

Dimension of the hidden representations.

|

| 48 |

+

intermediate_size (`int`, *optional*, defaults to 21504):

|

| 49 |

+

Dimension of the MLP representations.

|

| 50 |

+

num_hidden_layers (`int`, *optional*, defaults to 52):

|

| 51 |

+

Number of hidden layers in the Transformer encoder.

|

| 52 |

+

hybrid_override_pattern (`str`, *optional*, defaults to `"M-M-M-M*-M-M-M-M-M*-M-M-M-M-M*-M-M-M-M-M*-M-M-M-M-M-"`):

|

| 53 |

+

The pattern of the hybrid model. The pattern is a string of characters where each character represents M: Mamba2, *: Attention, -: MLP

|

| 54 |

+

num_attention_heads (`int`, *optional*, defaults to 32):

|

| 55 |

+

Number of attention heads for each attention layer in the Transformer encoder.

|

| 56 |

+

attention_head_dim (`int`, *optional*, defaults to 128):

|

| 57 |

+

Dimension of each attention head.

|

| 58 |

+

num_key_value_heads (`int`, *optional*, defaults to 8):

|

| 59 |

+

This is the number of key_value heads that should be used to implement Grouped Query Attention. If

|

| 60 |

+

`num_key_value_heads=num_attention_heads`, the model will use Multi Head Attention (MHA), if

|

| 61 |

+

`num_key_value_heads=1` the model will use Multi Query Attention (MQA) otherwise GQA is used.

|

| 62 |

+

mlp_hidden_act (`str`, *optional*, defaults to "relu2"):

|

| 63 |

+

The non-linear activation function in the MLP layers.

|

| 64 |

+

attention_bias (`bool`, *optional*, defaults to `False`):

|

| 65 |

+

Whether to use bias in attention layers.

|

| 66 |

+

mlp_bias (`bool`, *optional*, defaults to `False`):

|

| 67 |

+

Whether to use bias in MLP layers.

|

| 68 |

+

use_bias (`bool`, *optional*, defaults to `False`):

|

| 69 |

+

Whether to use bias in the model.

|

| 70 |

+

initializer_range (`float`, *optional*, defaults to 0.02):

|

| 71 |

+

The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

|

| 72 |

+

layer_norm_epsilon (`float`, *optional*, defaults to 1e-5):

|

| 73 |

+

The epsilon used by the layer normalization layers.

|

| 74 |

+

residual_in_fp32 (`bool`, *optional*, defaults to `False`):

|

| 75 |

+

Whether or not residuals should be in `float32`. If set to `False` residuals will keep the same `dtype` as the rest of the model.

|

| 76 |

+

use_cache (`bool`, *optional*, defaults to `True`):

|

| 77 |

+

Whether or not the model should return the last key/values attentions (not used by all models). Only

|

| 78 |

+

relevant if `config.is_decoder=True`.

|

| 79 |

+

num_logits_to_keep (`int` or `None`, *optional*, defaults to 1):

|

| 80 |

+

Number of prompt logits to calculate during generation. If `None`, all logits will be calculated. If an

|

| 81 |

+

integer value, only last `num_logits_to_keep` logits will be calculated.

|

| 82 |

+

pad_token_id (`int`, *optional*, defaults to 0):

|

| 83 |

+

The id of the padding token.

|

| 84 |

+

bos_token_id (`int`, *optional*, defaults to 1):

|

| 85 |

+

The id of the "beginning-of-sequence" token.

|

| 86 |

+

eos_token_id (`int`, *optional*, defaults to 2):

|

| 87 |

+

The id of the "end-of-sequence" token.

|

| 88 |

+

sliding_window (`int`, *optional*, defaults to None):

|

| 89 |

+

Sliding window attention window size.

|

| 90 |

+

max_position_embeddings (`int`, *optional*, defaults to 4096):

|

| 91 |

+

The maximum sequence length that this model might ever be used with.

|

| 92 |

+

attention_dropout (`float`, *optional*, defaults to 0.0):

|

| 93 |

+

The dropout ratio for the attention probabilities.

|

| 94 |

+

hidden_dropout (`float`, *optional*, defaults to 0.0):

|

| 95 |

+

The dropout ratio for the hidden states.

|

| 96 |

+

use_mamba_kernels (`bool`, *optional*, defaults to `True`):

|

| 97 |

+

Flag indicating whether or not to use the fast mamba kernels. These are available only if `mamba-ssm` and

|

| 98 |

+

`causal-conv1d` are installed, and the mamba modules are running on a CUDA device.

|

| 99 |

+

ssm_state_size (`int`, *optional*, defaults to 128):

|

| 100 |

+

The dimension of the mamba state space latents.

|

| 101 |

+

mamba_num_heads (`int`, *optional*, defaults to 128):

|

| 102 |

+

Number of heads in Mamba layers.

|

| 103 |

+

mamba_n_groups (`int`, *optional*, defaults to 8):

|

| 104 |

+

Number of groups in Mamba layers.

|

| 105 |

+

mamba_head_dim (`int`, *optional*, defaults to 64):

|

| 106 |

+

Dimension of each Mamba head.

|

| 107 |

+

mamba_d_conv (`int`, *optional*, defaults to 4):

|

| 108 |

+

The size of the mamba convolution kernel.

|

| 109 |

+

mamba_expand (`int`, *optional*, defaults to 2):

|

| 110 |

+

Expanding factor used to determine the mamba intermediate size.

|

| 111 |

+

mamba_hidden_act (`str`, *optional*, defaults to "silu"):

|

| 112 |

+

The non-linear activation function in the Mamba layers.

|

| 113 |

+

mamba_dt_min (`float`, *optional*, defaults to 0.001):

|

| 114 |

+

Minimum value for the time step in Mamba.

|

| 115 |

+

mamba_dt_max (`float`, *optional*, defaults to 0.1):

|

| 116 |

+

Maximum value for the time step in Mamba.

|

| 117 |

+

mamba_dt_limit (`tuple`, *optional*, defaults to (0.0, float("inf"))):

|

| 118 |

+

Limits for the time step in Mamba.

|

| 119 |

+

mamba_dt_init_floor (`float`, *optional*, defaults to 1e-4):

|

| 120 |

+

Floor value for time step initialization in Mamba.

|

| 121 |

+

mamba_conv_bias (`bool`, *optional*, defaults to `True`):

|

| 122 |

+

Whether to use bias in the convolution layer of the mamba mixer block.

|

| 123 |

+

mamba_proj_bias (`bool`, *optional*, defaults to `False`):

|

| 124 |

+

Whether to use bias in the input and output projections of the mamba mixer block.

|

| 125 |

+

mamba_chunk_size (`int`, *optional*, defaults to 256):

|

| 126 |

+

Size of chunks for Mamba processing.

|

| 127 |

+

rescale_prenorm_residual (`bool`, *optional*, defaults to `True`):

|

| 128 |

+

Whether to rescale the pre-normalization residual connections.

|

| 129 |

+

"""

|

| 130 |

+

|

| 131 |

+

model_type = "nemotron_h"

|

| 132 |

+

keys_to_ignore_at_inference = ["past_key_values"]

|

| 133 |

+

|

| 134 |

+

def __init__(

|

| 135 |

+

self,

|

| 136 |

+

vocab_size=131072,

|

| 137 |

+

tie_word_embeddings=False,

|

| 138 |

+

hidden_size=4096,

|

| 139 |

+

intermediate_size=21504,

|

| 140 |

+

num_hidden_layers=52,

|

| 141 |

+

hybrid_override_pattern="M-M-M-M*-M-M-M-M-M*-M-M-M-M-M*-M-M-M-M-M*-M-M-M-M-M-",

|

| 142 |

+

num_attention_heads=32,

|

| 143 |

+

#attention_head_dim=128,

|

| 144 |

+

head_dim=128,

|

| 145 |

+

num_key_value_heads=8, # nemo: num_query_groups

|

| 146 |

+

mlp_hidden_act="relu2",

|

| 147 |

+

attention_bias=False,

|

| 148 |

+

mlp_bias=False,

|

| 149 |

+

use_bias=False,

|

| 150 |

+

initializer_range=0.02, # nemo: init_method_std

|

| 151 |

+

layer_norm_epsilon=1e-5, # nemo: layernorm_epsilon

|

| 152 |

+

residual_in_fp32=False, # Megatron Core default value

|

| 153 |

+

use_cache=True,

|

| 154 |

+

num_logits_to_keep=1,

|

| 155 |

+

pad_token_id=0,

|

| 156 |

+

bos_token_id=1,

|

| 157 |

+

eos_token_id=2,

|

| 158 |

+

sliding_window=None,

|

| 159 |

+

max_position_embeddings=4096,

|

| 160 |

+

attention_dropout=0.0,

|

| 161 |

+

hidden_dropout=0.0, # * ADDED

|

| 162 |

+

use_mamba_kernels=True,

|

| 163 |

+

ssm_state_size=128, # mamba_state_size

|

| 164 |

+

mamba_num_heads=128,

|

| 165 |

+

mamba_n_groups=8, # nemo: mamba_ssm_ngroups = num_heads

|

| 166 |

+

mamba_head_dim=64,

|

| 167 |

+

mamba_d_conv=4,

|

| 168 |

+

mamba_expand=2,

|

| 169 |

+

mamba_hidden_act="silu",

|

| 170 |

+

mamba_dt_min=0.001,

|

| 171 |

+

mamba_dt_max=0.1,

|

| 172 |

+

mamba_dt_limit=(0.0, float("inf")),

|

| 173 |

+

mamba_dt_init_floor=1e-4,

|

| 174 |

+

mamba_conv_bias=True,

|

| 175 |

+

mamba_proj_bias=False,

|

| 176 |

+

mamba_chunk_size=256,

|

| 177 |

+

rescale_prenorm_residual=True,

|

| 178 |

+

**kwargs,

|

| 179 |

+

):

|

| 180 |

+

self.vocab_size = vocab_size

|

| 181 |

+

self.tie_word_embeddings = tie_word_embeddings

|

| 182 |

+

self.hidden_size = hidden_size

|

| 183 |

+

self.intermediate_size = intermediate_size

|

| 184 |

+

self.num_hidden_layers = num_hidden_layers

|

| 185 |

+

self.hybrid_override_pattern = hybrid_override_pattern

|

| 186 |

+

self.num_attention_heads = num_attention_heads

|

| 187 |

+

#self.attention_head_dim = attention_head_dim

|

| 188 |

+

self.head_dim = head_dim

|

| 189 |

+

self.sliding_window = sliding_window

|

| 190 |

+

self.max_position_embeddings = max_position_embeddings

|

| 191 |

+

self.attention_dropout = attention_dropout

|

| 192 |

+

self.hidden_dropout = hidden_dropout

|

| 193 |

+

|

| 194 |

+

# Validate hybrid_override_pattern

|

| 195 |

+

# M: Mamba2, *: Attention, -: MLP

|

| 196 |

+

assert len(self.hybrid_override_pattern) == self.num_hidden_layers, "hybrid_override_pattern must have the same length as num_hidden_layers"

|

| 197 |

+

assert re.match(r"^[*-M]+$", self.hybrid_override_pattern), "hybrid_override_pattern must only contain characters 'M', '*', or '-'"

|

| 198 |

+

|

| 199 |

+

# for backward compatibility

|

| 200 |

+

if num_key_value_heads is None:

|

| 201 |

+

num_key_value_heads = num_attention_heads

|

| 202 |

+

|

| 203 |

+

self.num_key_value_heads = num_key_value_heads

|

| 204 |

+

self.mlp_hidden_act = mlp_hidden_act

|

| 205 |

+

self.attention_bias = attention_bias

|

| 206 |

+

self.mlp_bias = mlp_bias

|

| 207 |

+

self.use_bias = use_bias

|

| 208 |

+

self.initializer_range = initializer_range

|

| 209 |

+

self.layer_norm_epsilon = layer_norm_epsilon

|

| 210 |

+

self.residual_in_fp32 = residual_in_fp32

|

| 211 |

+

|

| 212 |

+

self.use_cache = use_cache

|

| 213 |

+

self.num_logits_to_keep = num_logits_to_keep

|

| 214 |

+

|

| 215 |

+

self.use_mamba_kernels = use_mamba_kernels

|

| 216 |

+

self.n_groups = mamba_n_groups

|

| 217 |

+

self.mamba_head_dim = mamba_head_dim

|

| 218 |

+

self.ssm_state_size = ssm_state_size

|

| 219 |

+

self.mamba_num_heads = mamba_num_heads

|

| 220 |

+

self.conv_kernel = mamba_d_conv

|

| 221 |

+

self.expand = mamba_expand

|

| 222 |

+

self.mamba_hidden_act = mamba_hidden_act

|

| 223 |

+

self.time_step_min = mamba_dt_min

|

| 224 |

+

self.time_step_max = mamba_dt_max

|

| 225 |

+

self.time_step_limit = mamba_dt_limit

|

| 226 |

+

self.time_step_floor = mamba_dt_init_floor

|

| 227 |

+

self.use_conv_bias = mamba_conv_bias

|

| 228 |

+

self.mamba_proj_bias = mamba_proj_bias

|

| 229 |

+

self.chunk_size = mamba_chunk_size

|

| 230 |

+

self.rescale_prenorm_residual = rescale_prenorm_residual

|

| 231 |

+

|

| 232 |

+

super().__init__(

|

| 233 |

+

pad_token_id=pad_token_id,

|

| 234 |

+

bos_token_id=bos_token_id,

|

| 235 |

+

eos_token_id=eos_token_id,

|

| 236 |

+

tie_word_embeddings=tie_word_embeddings,

|

| 237 |

+

**kwargs,

|

| 238 |

+

)

|

| 239 |

+

|

| 240 |

+

@property

|

| 241 |

+

def layers_block_type(self):

|

| 242 |

+

return [

|

| 243 |

+

"mamba" if self.hybrid_override_pattern[i] == "M" else

|

| 244 |

+

"attention" if self.hybrid_override_pattern[i] == "*" else "mlp"

|

| 245 |

+

for i in range(self.num_hidden_layers)]

|

explainability.md

ADDED

|

@@ -0,0 +1,14 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

| Field | Response |

|

| 2 |

+

| :---- | :---- |

|

| 3 |

+

| Intended Task/Domain: | Text generation, reasoning, and chat |

|

| 4 |

+

| Model Type: | Text-to-text Mamba2-Transformer Hybrid |

|

| 5 |

+

| Intended Users: | Generative AI creators working with conversational AI models and image content. |

|

| 6 |

+

| Output: | Text |

|

| 7 |

+

| Tools used to evaluate datasets to identify synthetic data and ensure data authenticity. | We used a Gemma-3 4B-based filtering model fine-tuned on [Nemotron Content Safety Dataset v2](https://huggingface.co/datasets/nvidia/Aegis-AI-Content-Safety-Dataset-2.0) to ensure the quality of synthetic data. |

|

| 8 |

+

| Describe how the model works: | Generates text by predicting the next word or token based on the context provided in the input sequence using multiple self-attention layers. |

|

| 9 |

+

| Name the adversely impacted groups this has been tested to deliver comparable outcomes regardless of: | Not Applicable |

|

| 10 |

+

| Technical Limitations & Mitigation: | The model demonstrates weakness to alignment-breaking attacks. Users are advised to deploy language model guardrails alongside this model to prevent potentially harmful outputs. The Model may generate answers that are inaccurate, omit key information, or include irrelevant or redundant text. |

|

| 11 |

+

| Verified to have met prescribed NVIDIA quality standards: | Yes |

|

| 12 |

+

| Performance Metrics: | Accuracy, Throughput, and User-side throughput |

|

| 13 |

+

| Potential Known Risks: | The model was optimized explicitly for instruction following and as such is more susceptible to prompt injection and jailbreaking in various forms as a result of its instruction tuning. This means that the model should be paired with additional rails or system filtering to limit exposure to instructions from malicious sources \-- either directly or indirectly by retrieval (e.g. via visiting a website) \-- as they may yield outputs that can lead to harmful, system-level outcomes up to and including remote code execution in agentic systems when effective security controls including guardrails are not in place. The model was trained on data that contains toxic language and societal biases originally crawled from the internet. Therefore, the model may amplify those biases and return toxic responses especially when prompted with toxic prompts. The model may generate answers that may be inaccurate, omit key information, or include irrelevant or redundant text producing socially unacceptable or undesirable text, even if the prompt itself does not include anything explicitly offensive. |

|

| 14 |

+

| Licensing: | [NVIDIA Open Model License Agreement](https://www.nvidia.com/en-us/agreements/enterprise-software/nvidia-open-model-license/) |

|

generation_config.json

ADDED

|

@@ -0,0 +1,11 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"bos_token_id": 1,

|

| 4 |

+

"eos_token_id": [

|

| 5 |

+

2,

|

| 6 |

+

11,

|

| 7 |

+

12

|

| 8 |

+

],

|

| 9 |

+

"pad_token_id": 0,

|

| 10 |

+

"transformers_version": "4.51.3"

|

| 11 |

+

}

|

modeling_nemotron_h.py

ADDED

|

@@ -0,0 +1,1638 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|